While fake x-ray shaders are not very practical, they do look nice and are easy to implement. I found neither one I liked nor a good explanation, so here goes: The vertex program simply computes the screenspace … Read More

Blog

SRTM Elevation Data

I’ve been looking into free digital elevation models (DEM), and the most frequently mentioned source is NASA’s Shuttle Radar Topography Mission (SRTM). The resolution is roughly one data point every 30 meters, which would have been fine for what I wanted … Read More

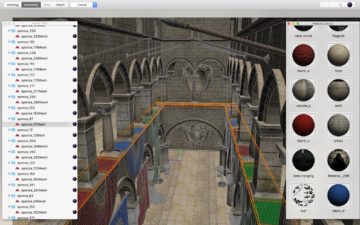

Multi-Body Mesh Editing

The Mesh-Editing Mode in Shapeflow3D finally comes to life! This is a major breakthrough that required a lot of work under the hood but opens up a lot of possibilities. Let me explain: … Read More

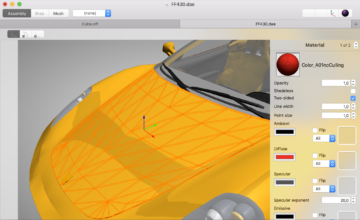

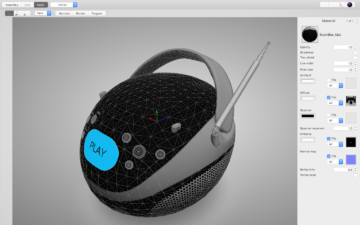

glTF Support and Material Editing

Recently, I’ve spent some time improving the material system. The main motivation for this was to get support for Physically Based Rendering and for importing files in the glTF format. Here are the first results: … Read More

Update of SVN/GIT policies

Over the last couple of months, I got some great feedback on my article about SVN/GIT policies. How to use a VCS “correctly” seems to be a hotly debated topic in pretty much every company and there are lots of misconceptions … Read More